Using the adabag and gbm packages

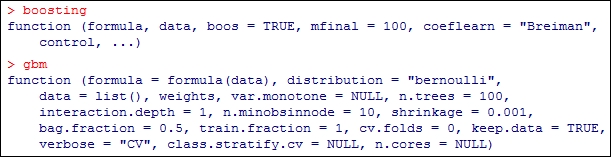

Boosting is a very effective ensemble technique. The algorithm has already been illustrated from scratch for classification and regression problems. Once the algorithm is clearly understood, we can use R packages to deliver results going forward. A host of packages are available for implementing boosting; in this section, we will use two of the most popular ones, adabag and gbm. The names are self-explanatory: adabag implements adaptive boosting methods, while gbm deals with gradient boosting methods. First, we look at the options available in the boosting and gbm functions in the following code:
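A minimal sketch of that inspection, assuming both packages are already installed from CRAN. The exact argument lists and defaults printed may differ between package versions, so no output is reproduced here.

```r
# Load the two packages discussed in this section
library(adabag)
library(gbm)

# Print the formal arguments (and their defaults) of each fitting function
args(boosting)  # adaptive boosting, from adabag
args(gbm)       # gradient boosting, from gbm
```

The help pages `?boosting` and `?gbm` describe each argument in more detail.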

The boosting and gbm functions

The formula argument is the usual one. The arguments mfinal in adabag and n.trees in gbm allow the specification of the number of trees or iterations. The boosting function gives the option of boos, which is the bootstrap sample of the...
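As a rough illustration of the iteration arguments, the following sketch fits one model with each function. The iris dataset, the seed, and the specific values of mfinal and n.trees are assumptions chosen only to keep the example small; they do not come from the text.

```r
library(adabag)
library(gbm)

set.seed(123)  # arbitrary seed, for reproducibility of this sketch only
data(iris)

# adabag: mfinal sets the number of boosting iterations (one tree each).
# With boos = TRUE, each iteration draws a bootstrap sample of the training
# set using the current observation weights.
ada_fit <- boosting(Species ~ ., data = iris, boos = TRUE, mfinal = 10)
length(ada_fit$trees)  # number of trees stored in the fitted object

# gbm: n.trees sets the number of trees in the gradient boosting fit.
# A Gaussian loss is assumed here because the response is numeric.
gbm_fit <- gbm(Sepal.Length ~ ., data = iris,
               distribution = "gaussian", n.trees = 50)
gbm_fit$n.trees        # number of trees fitted
```

Small values are used here so that the sketch runs quickly; in practice, the number of iterations is usually tuned rather than fixed in advance.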