Other variants of LSTMs

Although we focus mainly on the standard LSTM architecture, many variants have emerged that simplify the standard LSTM's complex architecture, improve its performance, or both. We will look at two variants that modify the structure of the LSTM cell: peephole connections and GRUs.

Peephole connections

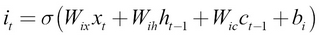

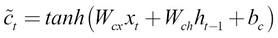

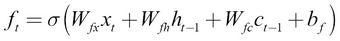

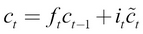

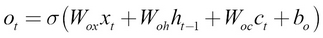

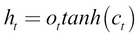

Peephole connections allow gates not only to see the current input and the previous final hidden state but also the previous cell state. This increases the number of weights in the LSTM cell. Having such connections have shown to produce better results. The equations would look like these:
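As a rough illustration of the idea, here is a minimal sketch of one time step of an LSTM cell with peephole connections, written in plain Python. It is not taken from any library. The function names, the `params` layout, and the choice to model each peephole weight as a vector applied elementwise to the previous cell state are all assumptions made for this example. Following the description above, all three gates look at the previous cell state. Some formulations instead let the output gate look at the current cell state.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def affine(W, x, U, h, b):
    """Return W @ x + U @ h + b, using plain Python lists."""
    return [
        sum(w * x_j for w, x_j in zip(W_row, x))
        + sum(u * h_j for u, h_j in zip(U_row, h))
        + b_k
        for W_row, U_row, b_k in zip(W, U, b)
    ]


def peephole_lstm_step(x, h_prev, c_prev, params):
    """One time step of a peephole LSTM cell (hypothetical layout).

    params["i"], params["f"], params["o"], params["g"] are (W, U, b) tuples
    for the input gate, forget gate, output gate, and candidate value.
    params["p_i"], params["p_f"], params["p_o"] are the peephole weight
    vectors (assumed elementwise) that expose c_prev to each gate.
    """

    def gate(name):
        W, U, b = params[name]
        pre = affine(W, x, U, h_prev, b)
        peep = params["p_" + name]
        # The extra term peep * c_prev is the peephole connection.
        return [sigmoid(z + p * c) for z, p, c in zip(pre, peep, c_prev)]

    i = gate("i")
    f = gate("f")
    o = gate("o")

    # The candidate value has no peephole; it sees only x and h_prev.
    W, U, b = params["g"]
    g = [math.tanh(z) for z in affine(W, x, U, h_prev, b)]

    c = [f_k * c_k + i_k * g_k for f_k, c_k, i_k, g_k in zip(f, c_prev, i, g)]
    h = [o_k * math.tanh(c_k) for o_k, c_k in zip(o, c)]
    return h, c


def zero_params():
    """All-zero parameters for input size 1 and hidden size 1."""
    block = ([[0.0]], [[0.0]], [0.0])
    return {"i": block, "f": block, "o": block, "g": block,
            "p_i": [0.0], "p_f": [0.0], "p_o": [0.0]}


params = zero_params()
h, c = peephole_lstm_step([1.0], [0.0], [2.0], params)
print(h, c)

# A large positive peephole weight on the output gate opens that gate
# whenever the previous cell state is large, raising the hidden state.
params["p_o"] = [5.0]
h_open, _ = peephole_lstm_step([1.0], [0.0], [2.0], params)
print(h_open)
```

With all weights set to zero, every gate evaluates to 0.5 regardless of the cell state. Setting a nonzero peephole weight is the only change that makes a gate respond to `c_prev`, which is the extra information the peephole connection provides.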

Let's briefly look at how this helps the LSTM perform better. In the standard LSTM, the gates see the current input and the previous final hidden state, but not the cell state. In that configuration, if the output gate is close to zero, then even when the cell state contains information crucial for better performance, the final hidden state will be close...