Deep approaches for RNN

The core principle of deep learning for improving the representational power of a network is to add more layers. For RNNs, two approaches to increasing the number of layers are possible:

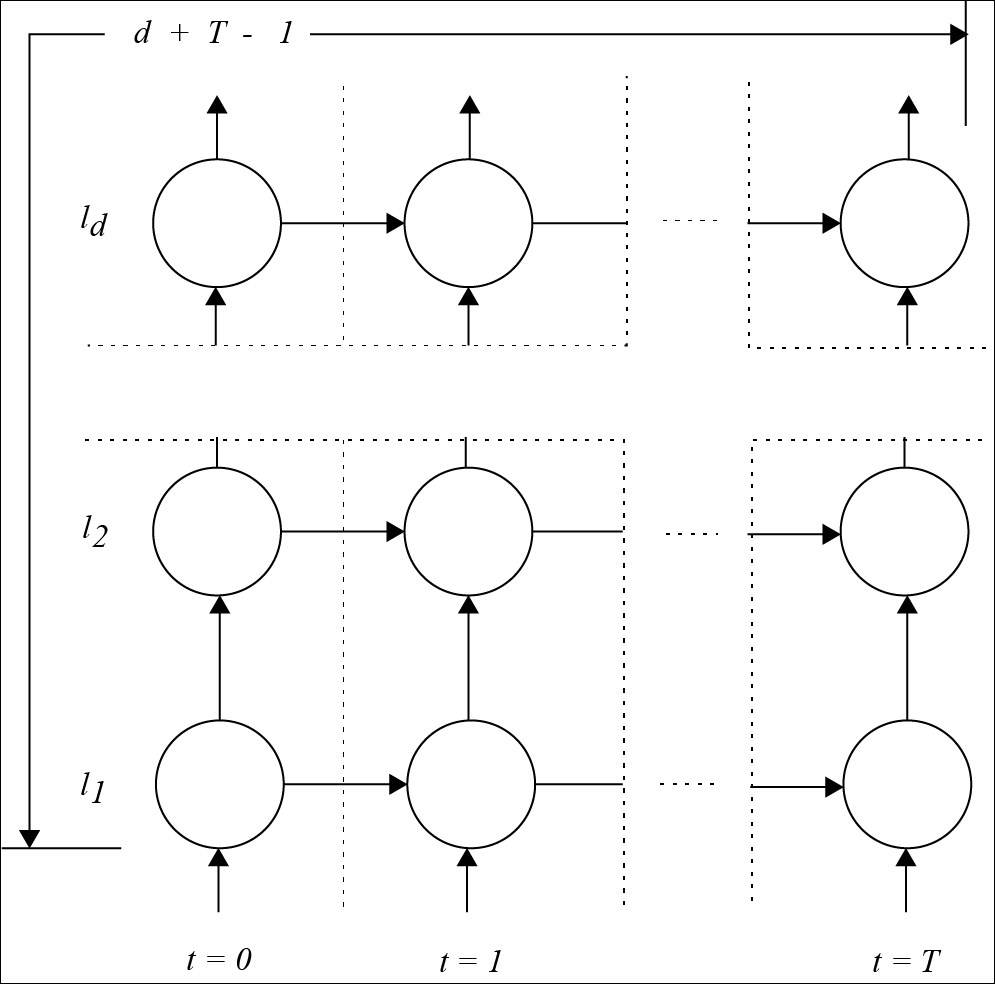

The first approach is known as stacking, or a stacked recurrent network. The output of the hidden layer of a first recurrent net is used as the input to a second recurrent net, and so on, with as many recurrent networks stacked on top of each other as needed.

For a depth d and T time steps, the maximum number of connections along a path between the input and the output is d + T − 1:

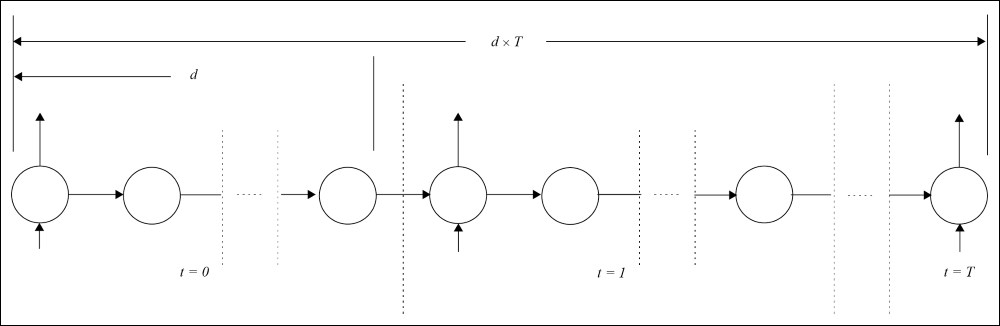
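A minimal pure-Python sketch of a stacked RNN forward pass follows. It assumes vanilla tanh cells, zero initial states and small random weights. The names `stacked_rnn`, `rnn_step`, `matvec` and `random_matrix` are hypothetical illustrations, not the API of any library. Each layer has its own weights, shared across time steps, and the full hidden-state sequence of one layer becomes the input sequence of the next.

```python
import math
import random

def matvec(W, v):
    """Multiply a matrix W (list of rows) by a vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def random_matrix(rows, cols, rng, scale=0.5):
    """Hypothetical helper: small uniform random weights."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

def rnn_step(W_in, W_rec, b, x, h_prev):
    """One vanilla RNN step (assumed cell): h = tanh(W_in x + W_rec h_prev + b)."""
    return [math.tanh(a + r + bi)
            for a, r, bi in zip(matvec(W_in, x), matvec(W_rec, h_prev), b)]

def stacked_rnn(xs, d, hidden, rng):
    """Run d vanilla RNN layers stacked on top of each other over the sequence xs.

    Layer 1 reads the inputs; each higher layer reads the hidden-state
    sequence of the layer below. Returns the top layer's hidden states.
    """
    seq = xs
    for _ in range(d):
        # each layer has its own weights, shared across all time steps
        W_in = random_matrix(hidden, len(seq[0]), rng)
        W_rec = random_matrix(hidden, hidden, rng)
        b = [0.0] * hidden
        h = [0.0] * hidden
        outputs = []
        for x in seq:
            h = rnn_step(W_in, W_rec, b, x, h)
            outputs.append(h)
        seq = outputs  # hidden states become the next layer's inputs
    return seq

rng = random.Random(0)
xs = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(5)]  # T = 5, input dim 3
top = stacked_rnn(xs, d=3, hidden=4, rng=rng)
print(len(top), len(top[0]))  # 5 4
```

Only the top layer's states would normally be fed to an output layer. Lower layers act as learned feature extractors over time for the layers above them.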

The second approach is the deep transition network, which adds more layers inside the recurrent connection, that is, between the hidden state at time t − 1 and the hidden state at time t:

Figure 2
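A comparable sketch of a deep transition network is given next. It assumes the transition from h_{t−1} to h_t is a chain of d tanh layers: the first layer reads both x_t and h_{t−1}, and the last layer produces h_t. The function and helper names are hypothetical. Unlike stacking, the same d-layer transition is reused at every time step, so every step's hidden state passes through all d layers before reaching the next step.

```python
import math
import random

def matvec(W, v):
    """Multiply a matrix W (list of rows) by a vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def random_matrix(rows, cols, rng, scale=0.5):
    """Hypothetical helper: small uniform random weights."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

def deep_transition_rnn(xs, d, hidden, rng):
    """RNN whose recurrent transition h_{t-1} -> h_t is a chain of d tanh layers.

    Returns the hidden state h_t for every time step.
    """
    # layer 1 of the transition reads both x_t and h_{t-1}
    W_in = random_matrix(hidden, len(xs[0]), rng)
    W_rec = random_matrix(hidden, hidden, rng)
    b1 = [0.0] * hidden
    # layers 2..d: extra layers inside the recurrent connection
    extra = [(random_matrix(hidden, hidden, rng), [0.0] * hidden) for _ in range(d - 1)]

    h = [0.0] * hidden
    states = []
    for x in xs:
        z = [math.tanh(a + r + bi)
             for a, r, bi in zip(matvec(W_in, x), matvec(W_rec, h), b1)]
        for W, b in extra:
            z = [math.tanh(v + bi) for v, bi in zip(matvec(W, z), b)]
        h = z  # the last transition layer outputs the new hidden state
        states.append(h)
    return states

rng = random.Random(0)
xs = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(5)]  # T = 5, input dim 3
states = deep_transition_rnn(xs, d=3, hidden=4, rng=rng)
print(len(states), len(states[0]))  # 5 4
```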

In this case, the maximum number of connections along a path between the input and the output is d × T, which has been shown to make the network considerably more expressive.
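Both counts can be checked on the unrolled computation graph. The sketch below makes one assumption about what is being counted: the number of hidden (nonlinear) nodes on the longest path from the first input x_1 to the output at time T. The helper names are hypothetical. It computes the longest path by dynamic programming over the graph.

```python
from functools import lru_cache

def longest_path_stacked(d, T):
    """Hidden nodes on the longest path from x_1 to the output at time T.

    Node (l, t) is the hidden state of layer l at time t. It is fed by
    (l - 1, t), the layer below, and by (l, t - 1), its own previous state.
    x_1 enters at (1, 1) and the output reads (d, T).
    """
    @lru_cache(maxsize=None)
    def longest(l, t):
        if (l, t) == (1, 1):
            return 1
        preds = []
        if l > 1:
            preds.append(longest(l - 1, t))
        if t > 1:
            preds.append(longest(l, t - 1))
        return 1 + max(preds)
    return longest(d, T)

def longest_path_deep_transition(d, T):
    """Same count when each transition h_{t-1} -> h_t has d layers.

    Node (k, t) is layer k of the transition at time t. Layer k > 1 is fed by
    layer k - 1; layer 1 is fed by h_{t-1}, i.e. node (d, t - 1).
    """
    @lru_cache(maxsize=None)
    def longest(k, t):
        if (k, t) == (1, 1):
            return 1
        if k > 1:
            return 1 + longest(k - 1, t)
        return 1 + longest(d, t - 1)
    return longest(d, T)

for d, T in [(2, 5), (3, 10)]:
    print(d, T, longest_path_stacked(d, T), longest_path_deep_transition(d, T))
# 2 5 6 10
# 3 10 12 30
```

In the stacked grid, depth and time add up along a path. In the deep transition network, every time step repeats all d layers, so they multiply.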

Both approaches provide better results than a shallow, single-layer RNN.

However, in the second approach, the number of layers traversed between input and output grows by a factor of d rather than by an additive term. As a result, training becomes much more complicated and unstable, since the signal fades or explodes...
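As a rough illustration of why longer paths hurt, the toy model below assumes that each nonlinear layer on a path scales the backpropagated signal by the same constant factor. This is a deliberate simplification: in a real network, the factor is the norm of a Jacobian that varies with the layer and the time step. The name `signal_scale` is hypothetical.

```python
def signal_scale(factor, n_layers):
    """Toy linear model (assumption): a signal or gradient multiplied by the
    same `factor` at each of `n_layers` nonlinear layers along a path."""
    return factor ** n_layers

d, T = 3, 20
paths = {"stacked (d + T - 1)": d + T - 1, "deep transition (d x T)": d * T}
for factor in (0.9, 1.1):  # slightly contracting vs slightly expanding layer
    for name, n in paths.items():
        print(f"factor={factor} {name}: n={n}, scale={signal_scale(factor, n):.4g}")
```

Because the deep transition path is much longer, a factor only slightly below 1 makes its signal vanish far faster than in the stacked network. A factor only slightly above 1 makes it blow up far faster.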