Configure HDFS cache

In Hadoop, centralized cache management is an explicit mechanism for caching frequently used files in DataNode memory. Users specify which paths HDFS should cache, and the blocks under those paths are then kept in memory instead of being evicted. The NameNode coordinates the caches of all DataNodes in the cluster and periodically receives a cache report from each DataNode.
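Caching only works if DataNodes are allowed to lock memory. Per the Apache documentation, `dfs.datanode.max.locked.memory` in hdfs-site.xml sets that budget in bytes (the default, 0, disables caching), and the memlock ulimit (`ulimit -l`) of the DataNode user must be raised to match. The following is a minimal pre-flight sketch, assuming it runs on a host whose hdfs-site.xml matches the DataNodes' configuration; the function name `check_cache_capacity` is hypothetical:

```shell
#!/usr/bin/env bash
# Sketch: check whether this host's HDFS configuration enables DataNode caching.
# dfs.datanode.max.locked.memory defaults to 0, which disables caching.
check_cache_capacity() {
  local locked
  # hdfs getconf reads the key from the configuration visible on this host.
  locked=$(hdfs getconf -confKey dfs.datanode.max.locked.memory 2>/dev/null)
  if [ -z "$locked" ] || [ "$locked" = "0" ]; then
    echo "caching disabled"
    return 1
  fi
  echo "caching enabled: ${locked} bytes may be locked per DataNode"
  # ulimit -l is reported in KB for the current user; run this as the
  # DataNode user to see the limit that actually applies to the DataNode.
  echo "memlock ulimit for $(id -un): $(ulimit -l)"
}
```

Calling `check_cache_capacity` prints the configured budget, or returns a non-zero status when caching is disabled.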

Getting ready

For this recipe, you will again need a running cluster with at least the HDFS daemons (the NameNode and the DataNodes) up.
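One way to confirm that HDFS is up is to count the live DataNodes in `hdfs dfsadmin -report`. This is a sketch only: it assumes the `Live datanodes (N):` line printed by recent Hadoop releases (older versions format the report differently), and the function names `live_datanodes` and `hdfs_ready` are hypothetical:

```shell
# Sketch: confirm HDFS is up before creating cache pools.
# Assumes the "Live datanodes (N):" line printed by recent Hadoop releases.
live_datanodes() {
  hdfs dfsadmin -report 2>/dev/null |
    sed -n 's/^Live datanodes (\([0-9][0-9]*\)):.*/\1/p'
}

hdfs_ready() {
  local n
  n=$(live_datanodes)
  # No matching line, or zero live nodes, means the cluster is not ready.
  if [ -z "$n" ] || [ "$n" -eq 0 ]; then
    echo "no live DataNodes reported"
    return 1
  fi
  echo "${n} live DataNode(s)"
}
```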

How to do it...

Connect to the

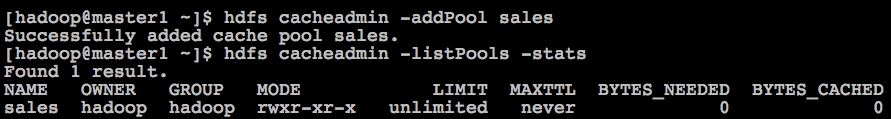

master1.cyrus.commaster node and switch to userhadoop.The first step is to define a cache pool, which is a collection of cache directives. Refer to the following command and screenshot:

$ hdfs cacheadmin -addPool sales

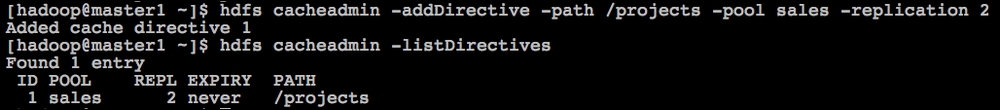
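A pool can also be given a cap on how much it may cache, and its usage can be listed afterwards. The sketch below uses the `-limit` option of `-addPool` and `-listPools -stats`; the function name `create_pool` and the 1 GiB limit in the usage line are illustrative assumptions, not values from this recipe:

```shell
# Sketch: create a cache pool with a byte limit, then show its statistics.
create_pool() {
  local pool="$1" limit_bytes="$2"
  # -limit caps the total bytes that all directives in the pool may cache.
  hdfs cacheadmin -addPool "$pool" -limit "$limit_bytes" || return 1
  # -stats adds the pool's byte and file counters to the listing.
  hdfs cacheadmin -listPools -stats "$pool"
}
```

For example, `create_pool sales 1073741824` would create the sales pool with a 1 GiB limit in place of the plain `-addPool` command.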

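Then, we need to define a cache directive, which specifies the path of a directory or file to cache. The following command adds /projects to the sales pool with a cache replication factor of 2, so two cached replicas of each block under it are kept in DataNode memory: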

$ hdfs cacheadmin -addDirective -path /projects -pool sales -replication 2
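The directive can be checked right after it is added. Here is a minimal sketch that also sets an expiry with `-ttl` (which accepts values such as `30m`, `4h`, `2d`, or `never`) and then lists the directive with `-stats`; the function name `cache_path` and its defaults are hypothetical:

```shell
# Sketch: cache a path in a pool with an optional expiry, then show its status.
cache_path() {
  local path="$1" pool="$2" replication="${3:-1}" ttl="${4:-never}"
  hdfs cacheadmin -addDirective -path "$path" -pool "$pool" \
    -replication "$replication" -ttl "$ttl" || return 1
  # BYTES_NEEDED vs BYTES_CACHED shows how much of the path is already in memory.
  hdfs cacheadmin -listDirectives -stats -path "$path" -pool "$pool"
}
```

For instance, `cache_path /projects sales 2 7d` matches the command above but lets the directive expire after seven days.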

Load a test file into the cached directory and see how the directive's statistics change, as shown in the following screenshot...
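The before-and-after comparison can also be scripted. This is a sketch under two assumptions: the function name `load_and_compare` and the local file name are hypothetical, and caching is asynchronous (the NameNode assigns blocks to DataNode caches on a periodic rescan), so BYTES_CACHED may lag behind BYTES_NEEDED for a short while after the upload:

```shell
# Sketch: upload a local test file into the cached directory and
# print the directive statistics before and after the upload.
load_and_compare() {
  local local_file="$1" cached_dir="$2"
  echo "== before =="
  hdfs cacheadmin -listDirectives -stats -path "$cached_dir"
  hdfs dfs -put "$local_file" "$cached_dir"/ || return 1
  echo "== after =="
  # Rerun this listing later if BYTES_CACHED has not caught up yet.
  hdfs cacheadmin -listDirectives -stats -path "$cached_dir"
}
```

Usage: `load_and_compare ./sample.dat /projects`.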