Principal Component Analysis

Another common approach to reducing the dimensionality of a high-dimensional dataset is based on the assumption that, normally, the total variance is not explained equally by all components. If p_data is a multivariate Gaussian distribution with covariance matrix Σ, then its entropy (a measure of the amount of information contained in the distribution) is as follows:

$$H(\Sigma) = \frac{1}{2}\log\det\left(2\pi e\,\Sigma\right)$$

Therefore, if some components have very low variance, they also make a limited contribution to the entropy and provide little additional information. Hence, they can be removed without a significant loss of accuracy.
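The Gaussian entropy H(Σ) = ½ log det(2πeΣ) is easy to evaluate numerically. The following is a minimal NumPy sketch that assumes a diagonal covariance, so that each component's variance is explicit. The function name `gaussian_entropy` and the variance values are hypothetical and chosen only for illustration. Alongside the entropy, the sketch prints the fraction of the total variance that each component explains, which is a common criterion for deciding which components to drop.

```python
import numpy as np

def gaussian_entropy(cov):
    """Differential entropy (in nats) of N(0, cov): 0.5 * log det(2*pi*e*cov)."""
    n = cov.shape[0]
    # slogdet avoids overflow/underflow of det() for large or tiny variances
    sign, logdet = np.linalg.slogdet(cov)
    if sign <= 0:
        raise ValueError("covariance must be positive definite")
    # log det(2*pi*e*cov) = n * log(2*pi*e) + log det(cov)
    return 0.5 * (n * np.log(2.0 * np.pi * np.e) + logdet)

# Hypothetical diagonal covariance: two dominant components and one negligible one
variances = np.array([5.0, 2.0, 0.01])
cov = np.diag(variances)

print(gaussian_entropy(cov))
# Fraction of the total variance explained by each component
print(variances / variances.sum())
```

Using `np.linalg.slogdet` instead of `np.log(np.linalg.det(...))` is a design choice for numerical stability. The two are mathematically equivalent.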

Just as we did with FA, let's consider a dataset drawn from p_data ∼ N(0, Σ) (for simplicity, we assume that it's zero-centered, even though this isn't necessary):

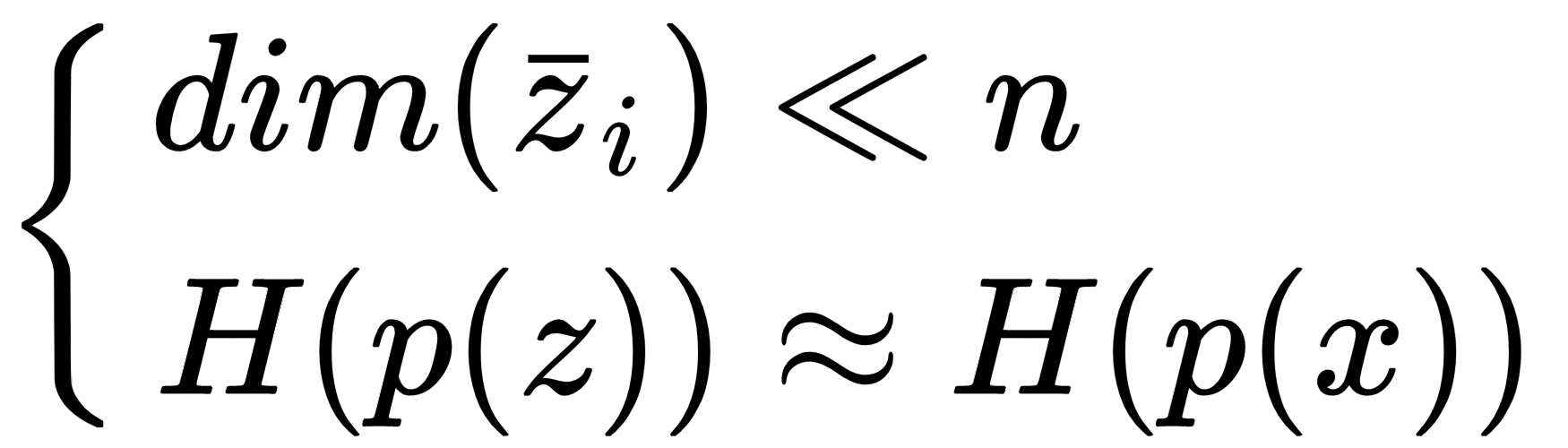
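A dataset like this can be simulated to experiment with. The following is a small sketch, assuming NumPy's `Generator.multivariate_normal` and a hypothetical 3 × 3 covariance matrix `sigma`. Each row of `X` is one sample xᵀ. The data is explicitly zero-centered, and the sample covariance is then estimated as XᵀX / (m − 1).

```python
import numpy as np

rng = np.random.default_rng(1000)

# Hypothetical true covariance (n = 3 features); positive definite
sigma = np.array([[4.0, 1.5, 0.2],
                  [1.5, 2.0, 0.1],
                  [0.2, 0.1, 0.05]])

# Draw m samples from N(0, sigma); each row of X is one sample x^T
m = 5000
X = rng.multivariate_normal(mean=np.zeros(3), cov=sigma, size=m)

# Explicit zero-centering (the true mean is already 0, so this only
# removes the small sampling offset)
X -= X.mean(axis=0)

# Sample covariance of the zero-centered data: X^T X / (m - 1)
C = X.T @ X / (m - 1)
print(np.round(C, 2))
```

With this many samples, `C` should be close to `sigma`. It also matches `np.cov(X, rowvar=False)`, which computes the same unbiased estimate.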

Our goal is to define a linear transformation, z = Aᵀx (a vector is normally considered a column, therefore x has shape (n × 1)), such as the following:
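Shape conventions are easy to mix up here, so a short sketch may help. It assumes the samples are stored as the rows of a zero-centered matrix X with shape (m × n), and it uses a hypothetical random matrix A with k = 2 columns (in PCA, A is not random; this is only a shape check). Applying z = Aᵀx to each column vector x is equivalent to computing Z = XA for the whole dataset. The covariance of z is then AᵀCA, where C is the covariance of x.

```python
import numpy as np

rng = np.random.default_rng(0)

m, n, k = 1000, 3, 2
X = rng.normal(size=(m, n))   # rows are samples x^T, shape (m, n)
X -= X.mean(axis=0)           # zero-centered, as assumed in the text
A = rng.normal(size=(n, k))   # hypothetical transformation matrix, shape (n, k)

# Per-sample form: z = A^T x, with x a column vector of shape (n, 1)
x = X[0].reshape(n, 1)
z = A.T @ x                   # shape (k, 1)

# Whole-dataset form: stacking the rows z^T = x^T A gives Z = X A
Z = X @ A                     # shape (m, k)

# Covariance of the transformed data equals A^T C A
C = X.T @ X / (m - 1)
C_z = Z.T @ Z / (m - 1)
```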

As we want to find out the directions where the variance...