Spark – packaging and API

With the architecture and basic data flow of Spark covered, this section takes the next step and introduces the programming paradigms and APIs that are most frequently used to build custom solutions around Spark.

As we know by now, the Spark framework is developed in Scala, and it exposes APIs in Scala, Python, and Java that developers can use to interact with, build on, and customize the framework. For this discussion, we will limit ourselves to the Scala and Java APIs.

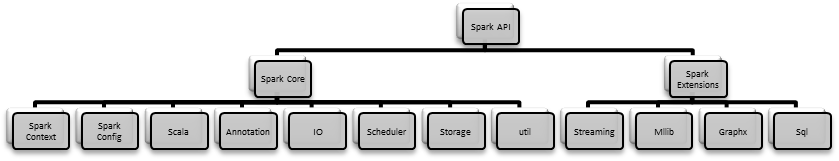

Spark APIs can be categorized into two broad segments:

- Spark core

- Spark extensions
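As a rough illustration of this split, the following Scala sketch pulls classes from both segments: the core classes `SparkConf` and `SparkContext` from `org.apache.spark`, and the Spark Streaming classes `StreamingContext` and `Seconds` from `org.apache.spark.streaming`. The object name `PackagingSketch`, the application name, the `local[2]` master, and the 5-second batch interval are assumptions chosen only for this example, and the code presumes that the spark-core and spark-streaming libraries are on the classpath.

```scala
// Core API: lives directly under org.apache.spark
import org.apache.spark.{SparkConf, SparkContext}
// Extension API: Spark Streaming has its own package structure
import org.apache.spark.streaming.{Seconds, StreamingContext}

// Hypothetical object name, used only for this sketch
object PackagingSketch {
  def main(args: Array[String]): Unit = {
    // Assumed settings: local mode with two threads
    val conf = new SparkConf()
      .setAppName("PackagingSketch")
      .setMaster("local[2]")

    // Entry point of the core API
    val sc = new SparkContext(conf)

    // Entry point of the streaming extension, layered on the core context
    // (the 5-second batch interval is an arbitrary choice)
    val ssc = new StreamingContext(sc, Seconds(5))

    // A small job that uses only the core API
    val total = sc.parallelize(1 to 10).reduce(_ + _)
    println(s"Sum computed with the core API: $total")

    // No streaming job was started; stop the streaming context
    // and the underlying SparkContext together
    ssc.stop(stopSparkContext = true, stopGracefully = false)
  }
}
```

The point of the sketch is only the import layout: the extension's entry point wraps the core `SparkContext` rather than replacing it, so an application that uses an extension still depends on the core package.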

As depicted in the preceding diagram, at a high level, the Spark codebase is divided into two packages:

- Spark extensions: All APIs for a particular extension are packaged in their own package structure. For example, all APIs for Spark Streaming are packaged in the org.apache.spark.streaming.* package and the...