User-defined functions

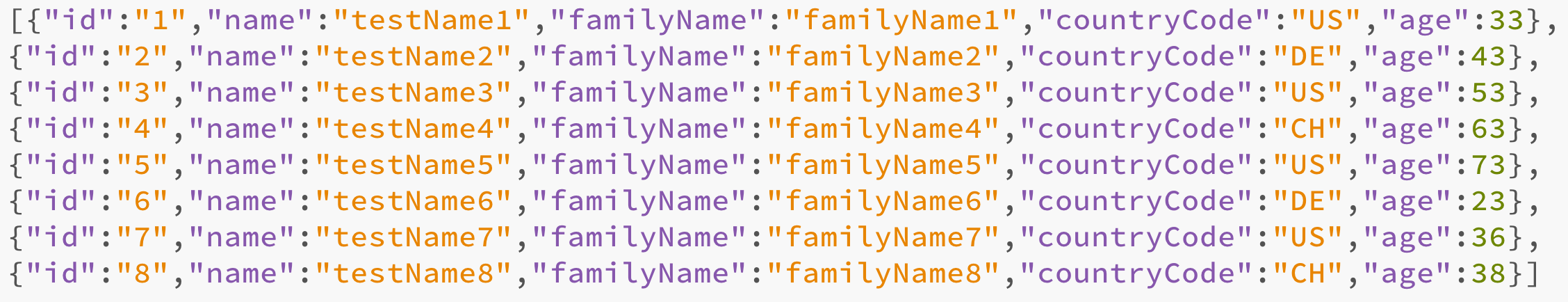

To create user-defined functions in Scala, we first need to examine the data in the previous Dataset. We will use the age property on the client entries in the previously introduced client.json. We plan to create a UDF that maps the age column onto a small set of age ranges. This helps when the data feeds a machine learning model, because a smaller number of distinct values is sometimes easier to work with. This process is also called binning or categorization. This is the JSON file with the age property added:

Now let's define a Scala enumeration that converts ages into age range codes. If we use this enumeration across all our relations, we can ensure that these ranges are coded consistently and correctly:

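object AgeRange extends Enumeration {
  val Zero, Ten, Twenty, Thirty, Fourty, Fifty, Sixty, Seventy, Eighty, Ninety, HundretPlus = Value

  // Map an age to the code of its ten-year range.
  // `until` excludes its upper bound, so 0 until 10 covers ages 0 to 9
  // and the next range has to start at 10.
  def getAgeRange(age: Integer) = {
    age match {
      case age if 0 until 10 contains age => Zero
      case age if 10 until 20 contains age ...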
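
To make the binning idea concrete on its own, here is a minimal, self-contained sketch in plain Scala. It is not the AgeRange object from the listing above: the names AgeBin and fromAge are hypothetical, the value names use corrected spellings, and the sketch assumes that every range spans exactly ten years, which lets integer division replace the chain of pattern guards.

```scala
// Hypothetical names (AgeBin, fromAge) chosen for illustration only.
object AgeBin extends Enumeration {
  // Values receive the ids 0, 1, 2, ... in declaration order,
  // so Zero has id 0, Ten has id 1, and HundredPlus has id 10.
  val Zero, Ten, Twenty, Thirty, Forty, Fifty, Sixty, Seventy, Eighty, Ninety, HundredPlus = Value

  // Map an age to its ten-year bin. Integer division by 10 yields the id
  // of the bin; ages of 100 and above are clamped into HundredPlus.
  def fromAge(age: Int): Value = {
    require(age >= 0, s"age must be non-negative, got $age")
    AgeBin(math.min(age / 10, HundredPlus.id))
  }
}
```

With this sketch, `AgeBin.fromAge(9)` yields `Zero`, `AgeBin.fromAge(10)` yields `Ten`, and `AgeBin.fromAge(123)` yields `HundredPlus`. Because the boundaries fall out of the arithmetic, no age can slip between two ranges, which is the kind of gap that hand-written guards are prone to.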