What is Spark Streaming?

At its core, Spark Streaming is a scalable, fault-tolerant streaming system that takes the RDD batch paradigm (that is, processing data in batches) and speeds it up by running it on small batches at short intervals. Although this is a slight oversimplification, Spark Streaming essentially operates in mini-batches, one per batch interval (from 500 ms up to larger interval windows).

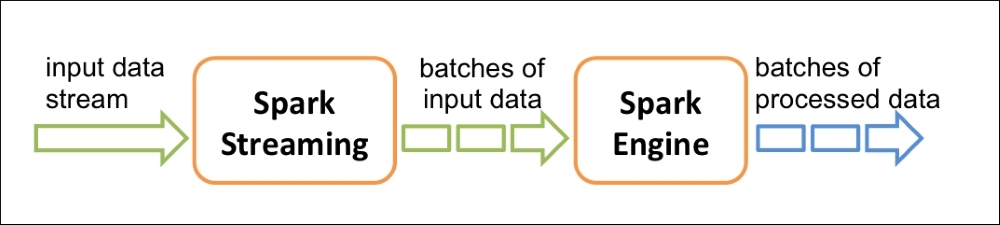

As the diagram shows, Spark Streaming receives an input data stream and internally breaks it into multiple smaller batches, the size of which is determined by the batch interval. The Spark engine then processes those batches of input data and produces a corresponding stream of batches of processed data.

Source: Apache Spark Streaming Programming Guide, http://spark.apache.org/docs/latest/streaming-programming-guide.html
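To make the mini-batch idea concrete, here is a minimal sketch in plain Python that does not use Spark at all. It assumes a hypothetical stream of `(timestamp_ms, word)` events and a 500 ms batch interval; the names `discretize` and `process` are illustrative only and are not part of any Spark API. It groups events by the interval they arrive in and then runs the same batch job (a word count) on each group, which is roughly the flow described above.

```python
from collections import defaultdict


def discretize(events, batch_interval_ms):
    """Group (timestamp_ms, value) events into consecutive micro-batches.

    Batch k holds the events that arrive in the half-open window
    [k * batch_interval_ms, (k + 1) * batch_interval_ms).
    """
    batches = defaultdict(list)
    for timestamp_ms, value in events:
        batches[timestamp_ms // batch_interval_ms].append(value)
    # Return the batches in time order. Simplification: intervals that
    # received no events are skipped rather than emitted as empty batches.
    return [batches[k] for k in sorted(batches)]


def process(batch):
    """Stand-in for the batch job run on every micro-batch: a word count."""
    counts = {}
    for word in batch:
        counts[word] = counts.get(word, 0) + 1
    return counts


# Hypothetical input stream: (arrival time in ms, word)
events = [
    (0, "spark"), (120, "stream"), (480, "spark"),
    (510, "batch"), (990, "batch"),
    (1200, "spark"),
]

input_batches = discretize(events, batch_interval_ms=500)
results = [process(batch) for batch in input_batches]

for index, counts in enumerate(results):
    print(f"batch {index}: {counts}")
```

With a 500 ms interval, the six events fall into three batches, and each batch gets its own independent result. Choosing a larger interval would yield fewer, bigger batches at the cost of higher latency before each result becomes available.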

The key abstraction for Spark Streaming is the Discretized Stream (DStream), which represents the previously mentioned small batches that make up the stream of data. DStreams are built on RDDs, allowing Spark developers to work within the...